Supercharging Asynchronous Performance: A Deep Dive into Python FastAPI and OpenAI API Optimization

Hey there! I'm a passionate Full Stack Developer with a knack for building scalable, high-performance web applications and solving intricate technical challenges. With a proven track record in both individual and team-led projects, I specialize in crafting robust solutions across diverse domains.

Currently, I co-run a development firm where I architect and implement state-of-the-art web applications using cutting-edge technologies like Next.js, React, Node and AWS. My experience spans frontend and backend development, DevOps practices, and real-time communication systems.

Technical Arsenal:

- Frontend: Next.js, React, TypeScript, Socket.IO

- Backend: Node.js, Express.js, NestJS, gRPC

- Cloud & DevOps: AWS (S3, Lambda, CloudFront), Docker, Serverless, CI/CD (GitHub Actions)

- Databases: PostgreSQL, MongoDB, Redis

- Other Frameworks: Microservices Architecture, Frappe Framework

📝 Here, I write about:

- Web Development Best Practices

- System Design and Architecture

- Performance Optimization for Large-Scale Applications

- CI/CD and Deployment Strategies

- Cloud and Serverless Solutions

- Real-Time Communications (Voice/Video/Chat)

- Blockchain Integrations and Token Exchange Mechanisms

🌱 Currently Exploring:

- AI/ML Integrations in Web Applications

- Advanced Microservices Architecture

- Scaling Real-Time Applications for Millions of Users

🤝 Open to:

- Collaborations on challenging projects

- Technical consultations on scaling and optimizing applications

- Networking with like-minded developers

Let’s connect and create something extraordinary together!

#WebDevelopment #FullStack #React #AWS #DevOps #RealTime #CI/CD #SoftwareEngineering

Introduction

In the world of modern web applications, performance is king. Recently, while working on an AI-powered story generation project, I found that moving our I/O-bound work from threads to asynchronous programming cut our application's processing time by 2.5 minutes.

The Initial Challenge

Our project involved a complex system with multiple components:

- React Native mobile app
- Node.js backend
- Python FastAPI service
- OpenAI API integrations for story and image generation

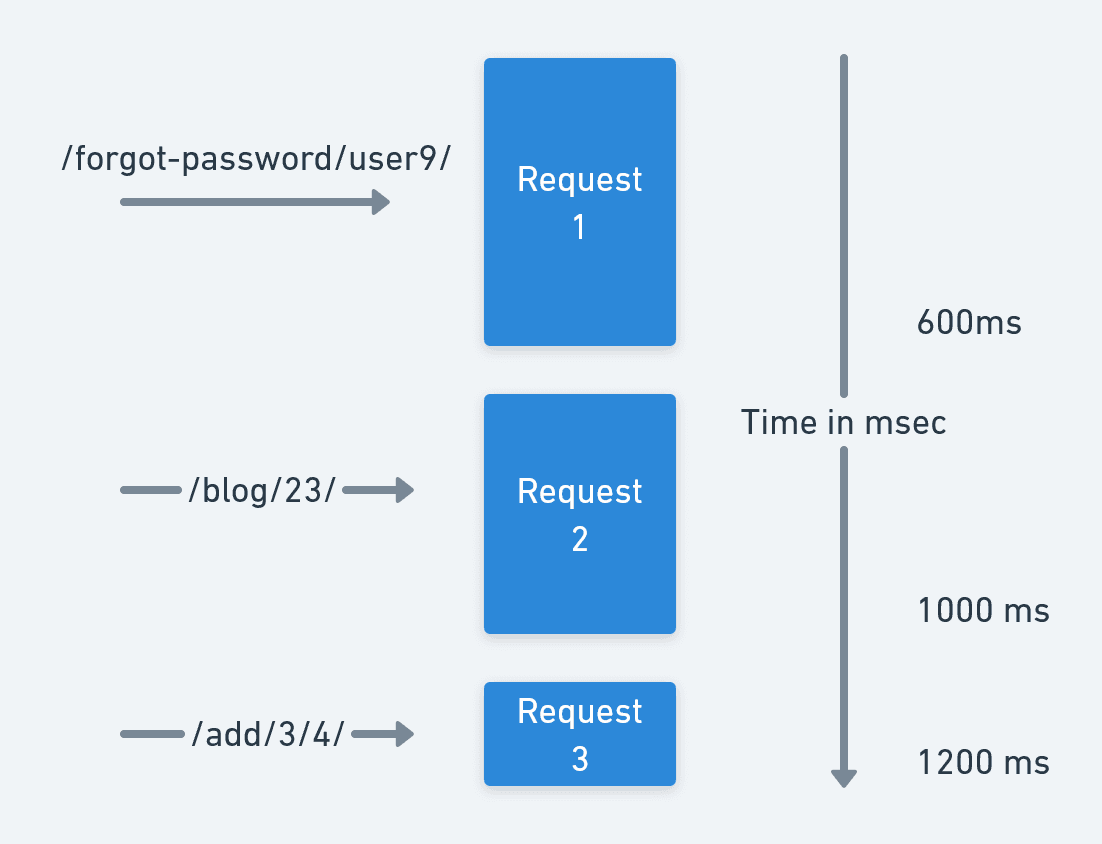

The initial implementation used Python's ThreadPoolExecutor to run these tasks concurrently. It seemed efficient, but it hid some performance bottlenecks.

Understanding the Synchronous Bottleneck

The Problem with ThreadPoolExecutor

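- Each task occupied a worker thread for its entire duration, including the time spent waiting on I/O
- Switching between threads added context-switching overhead
- Each call still blocked its own thread, so the pool size capped how many requests could be in flight at once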
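To make the bottleneck concrete, here is a minimal sketch of the thread-based pattern; it is an illustration, not our production code. `fake_api_call` is a hypothetical stand-in that simulates a 0.2-second network wait with `time.sleep`. With two workers, four calls need about two rounds of waiting, because each worker stays occupied for the whole wait.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_api_call(prompt: str) -> str:
    """Hypothetical blocking request: the worker thread sits idle during the wait."""
    time.sleep(0.2)  # simulated network latency
    return f"result for {prompt}"


def run_with_threads(prompts: list[str], max_workers: int = 2) -> tuple[list[str], float]:
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() preserves input order; each call holds one worker until it returns
        results = list(pool.map(fake_api_call, prompts))
    return results, time.perf_counter() - start


if __name__ == "__main__":
    results, elapsed = run_with_threads(["a", "b", "c", "d"])
    # Expect roughly 0.4s: two rounds of two overlapping 0.2s waits
    print(results, f"{elapsed:.2f}s")
```

Adding workers raises the ceiling, but every in-flight request still costs a whole thread.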

The Async Advantage

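Asynchronous programming takes a different approach, in which a single event loop runs many coroutines:

- Each coroutine yields control to the event loop while it waits on I/O
- Many in-flight requests share one thread instead of tying up a thread each, so resources are used more efficiently
- Execution is non-blocking: a slow response does not stall the other requests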
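A minimal sketch of the async model, using a hypothetical `fake_async_api_call` that simulates a 0.2-second network wait with `asyncio.sleep`. Four calls are started together with `asyncio.gather`; each `await` hands control back to the event loop, so the waits overlap and every coroutine runs on the same OS thread.

```python
import asyncio
import threading
import time


async def fake_async_api_call(prompt: str) -> tuple[str, int]:
    """Hypothetical non-blocking request; await yields to the event loop."""
    await asyncio.sleep(0.2)  # simulated network latency
    # Also report which OS thread ran this coroutine
    return f"result for {prompt}", threading.get_ident()


async def run_with_asyncio(prompts: list[str]) -> tuple[list[str], set[int], float]:
    start = time.perf_counter()
    outcomes = await asyncio.gather(*(fake_async_api_call(p) for p in prompts))
    elapsed = time.perf_counter() - start
    results = [text for text, _ in outcomes]
    thread_ids = {tid for _, tid in outcomes}
    return results, thread_ids, elapsed


if __name__ == "__main__":
    results, thread_ids, elapsed = asyncio.run(run_with_asyncio(["a", "b", "c", "d"]))
    # All four waits overlap on one thread, so expect roughly 0.2s in total
    print(results, len(thread_ids), f"{elapsed:.2f}s")
```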

Key Optimization Strategies

1. Async Image Generation

We rewrote our image generation step with asyncio and aiohttp so that the requests for all illustration prompts run concurrently:

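```python
import asyncio

import aiohttp


async def call_image_generation_api(illustration_prompts):
    # One ClientSession for the whole batch, so its connection pool is reused
    async with aiohttp.ClientSession() as session:
        # generate_image(session, prompt) is our per-prompt request helper (not shown)
        tasks = [generate_image(session, prompt) for prompt in illustration_prompts]
        # Run all requests concurrently; results come back in prompt order
        results = await asyncio.gather(*tasks)
    return results
```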

Benefits:

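- Concurrent API requests instead of one at a time
- Lower total processing time, since the network waits overlap
- Efficient resource management: one shared session and its connection pool serve every request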
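`asyncio.gather` starts every request at once. If the image API enforces rate limits, one common refinement (not part of the code above) is to cap the number of in-flight requests with `asyncio.Semaphore`. In this sketch, `fake_generate_image` is a hypothetical stand-in for a `generate_image(session, prompt)` call, the example.com URLs are placeholders, and the limit of 2 is arbitrary.

```python
import asyncio


async def fake_generate_image(prompt: str) -> str:
    """Hypothetical stand-in for generate_image(session, prompt)."""
    await asyncio.sleep(0.05)  # simulated API latency
    return f"https://example.com/{prompt}.png"


async def generate_all(prompts: list[str], limit: int = 2) -> tuple[list[str], int]:
    semaphore = asyncio.Semaphore(limit)
    in_flight = 0
    peak = 0  # tracked only to make the cap observable

    async def bounded(prompt: str) -> str:
        nonlocal in_flight, peak
        async with semaphore:  # waits here while `limit` requests are already running
            in_flight += 1
            peak = max(peak, in_flight)
            try:
                return await fake_generate_image(prompt)
            finally:
                in_flight -= 1

    # gather still returns results in the same order as `prompts`
    urls = await asyncio.gather(*(bounded(p) for p in prompts))
    return urls, peak


if __name__ == "__main__":
    urls, peak = asyncio.run(generate_all(["cat", "dog", "owl", "fox", "elk"]))
    print(urls, peak)
```

Production code would drop the `peak` bookkeeping and keep only the semaphore.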

2. Improved OpenAI Interactions

Switching to the AsyncOpenAI client made our JSON generation calls non-blocking:

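```python
from openai import AsyncOpenAI


async def gpt_json(prompt, json_schema):
    client = AsyncOpenAI()  # reads OPENAI_API_KEY from the environment by default
    response = await client.chat.completions.create(
        model="gpt-4-turbo",
        # JSON mode: the reply is a JSON object; the messages must mention "JSON"
        response_format={"type": "json_object"},
        messages=[...],  # elided
    )
    return response.choices[0].message.content
```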

Advantages:

- Non-blocking API calls
- Structured JSON responses
- Better overall performance when several calls run at once
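JSON mode is designed to return valid JSON, but it does not guarantee that the object follows any particular schema, and a reply cut off by the token limit can still be incomplete. A minimal stdlib-only sketch of checking the reply before use, assuming the reply text is `response.choices[0].message.content` and that checking required top-level keys is enough validation; `parse_story_json`, `InvalidModelOutput`, and the key names are hypothetical.

```python
import json
from typing import Any


class InvalidModelOutput(ValueError):
    """Raised when the model's reply is not the JSON object we expected."""


def parse_story_json(content: str, required_keys: list[str]) -> dict[str, Any]:
    try:
        data = json.loads(content)
    except json.JSONDecodeError as exc:
        # e.g. a reply truncated by the token limit
        raise InvalidModelOutput(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise InvalidModelOutput("expected a JSON object at the top level")
    missing = [key for key in required_keys if key not in data]
    if missing:
        raise InvalidModelOutput(f"missing keys: {missing}")
    return data


if __name__ == "__main__":
    story = parse_story_json('{"title": "The Fox", "pages": ["Once upon a time"]}', ["title", "pages"])
    print(story["title"])  # The Fox
```

For stricter guarantees, a full JSON Schema validator could replace the key check.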

Performance Metrics

Our optimizations resulted in:

- A 2.5-minute reduction in processing time
- A more responsive application
- Better resource utilization
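Where does a saving like this come from? With independent I/O-bound calls, awaiting them one after another costs roughly the sum of their latencies, while running them with `asyncio.gather` costs roughly the slowest one. The sketch below illustrates this with made-up latencies (not our production measurements). It also shows a common pitfall: awaiting inside a `for` loop is still sequential, even inside an `async` function.

```python
import asyncio
import time

# Hypothetical per-call latencies in seconds, purely for illustration
LATENCIES = [0.1, 0.2, 0.15]


async def fake_call(delay: float) -> float:
    await asyncio.sleep(delay)  # simulated I/O wait
    return delay


async def run_sequential() -> float:
    start = time.perf_counter()
    for delay in LATENCIES:
        await fake_call(delay)  # each call finishes before the next one starts
    return time.perf_counter() - start


async def run_concurrent() -> float:
    start = time.perf_counter()
    await asyncio.gather(*(fake_call(d) for d in LATENCIES))  # waits overlap
    return time.perf_counter() - start


async def main() -> tuple[float, float]:
    return await run_sequential(), await run_concurrent()


if __name__ == "__main__":
    seq, conc = asyncio.run(main())
    # Expect roughly sum(LATENCIES) = 0.45s versus max(LATENCIES) = 0.2s
    print(f"sequential {seq:.2f}s, concurrent {conc:.2f}s")
```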

Best Practices

- Use native async libraries (asyncio, aiohttp)
- Leverage connection pooling
- Implement robust error handling
- Use environment-based configuration
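For error handling, one pattern we would suggest (a sketch, not the exact code from our project) wraps each call in a per-attempt timeout and a small retry loop with exponential backoff, with the limits read from environment variables. The variable names `API_TIMEOUT_SECONDS` and `API_MAX_RETRIES`, their defaults, and the `flaky_call`/`always_fails` stand-ins are hypothetical. The built-in `ConnectionError` represents a transient failure here; a real client raises its own exception types, and the openai client already retries some failures itself (configurable through its `max_retries` option). `gather(..., return_exceptions=True)` returns failures as exception objects, so one bad prompt does not prevent collecting the other results.

```python
import asyncio
import os
import random

# Hypothetical environment variables, with defaults for local runs
TIMEOUT_SECONDS = float(os.environ.get("API_TIMEOUT_SECONDS", "30"))
MAX_RETRIES = int(os.environ.get("API_MAX_RETRIES", "3"))

# Errors treated as transient in this sketch
RETRYABLE = (asyncio.TimeoutError, ConnectionError)


async def with_retries(make_call, *, retries=MAX_RETRIES, timeout=TIMEOUT_SECONDS, base_delay=0.5):
    """Await make_call() with a per-attempt timeout, retrying with exponential backoff.

    make_call must be a zero-argument function returning a fresh coroutine,
    because a coroutine object can only be awaited once.
    """
    for attempt in range(retries + 1):
        try:
            return await asyncio.wait_for(make_call(), timeout=timeout)
        except RETRYABLE:
            if attempt == retries:
                raise  # out of retries: surface the last error to the caller
            # Backoff of base_delay, 2x, 4x, ... plus random jitter
            await asyncio.sleep(base_delay * 2**attempt + random.uniform(0, base_delay))


async def demo():
    attempts = 0

    async def flaky_call():
        # Hypothetical stand-in for an API request: fails twice, then succeeds
        nonlocal attempts
        attempts += 1
        if attempts < 3:
            raise ConnectionError("simulated network error")
        return "ok"

    async def always_fails():
        raise ConnectionError("simulated outage")

    results = await asyncio.gather(
        with_retries(flaky_call, retries=3, base_delay=0.01),
        with_retries(always_fails, retries=1, base_delay=0.01),
        return_exceptions=True,  # failures come back as exception objects, not raised
    )
    return results, attempts


if __name__ == "__main__":
    print(asyncio.run(demo()))
```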

Recommended Libraries

- aiohttp for async HTTP requests
- openai, whose AsyncOpenAI client supports async calls
- httpx, an HTTP client with both sync and async APIs
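When a dependency has no async variant, threads still have a role. A minimal sketch, assuming a hypothetical `legacy_blocking_call` with only a synchronous API: `asyncio.to_thread` (Python 3.9+) runs each call in the event loop's default thread pool, so the loop stays free to serve other coroutines while the blocking calls wait.

```python
import asyncio
import time


def legacy_blocking_call(prompt: str) -> str:
    """Hypothetical synchronous SDK call with no async variant."""
    time.sleep(0.2)  # blocks whichever thread runs it
    return prompt.upper()


async def run_blocking_safely(prompts: list[str]) -> list[str]:
    # Each call runs in a worker thread; gather awaits them all, keeping input order
    return await asyncio.gather(*(asyncio.to_thread(legacy_blocking_call, p) for p in prompts))


if __name__ == "__main__":
    print(asyncio.run(run_blocking_safely(["a", "b", "c"])))  # ['A', 'B', 'C']
```

This keeps the event loop responsive, but each call still occupies a thread, so native async libraries remain the better choice where they exist.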

Conclusion

Asynchronous programming isn't just a technique; it's a way of thinking about performance. By embracing async patterns, we turned our I/O-bound operations from sequential bottlenecks into concurrent work.


Happy Async Coding! 🚀